Coaching Claude

by Henrik Holen on Tue Feb 17 2026

There’s a question doing the rounds that trips up the LLMs. “I want to wash my car and the car wash is 100 metres away. Should I drive or walk?” Claude says walk. ChatGPT says walk. They both give perfectly sensible reasons about short distances and fresh air, and they both miss the obvious: you need to bring the car.

It’s funny, but the failure is more interesting than the joke. The model processes each fact correctly in isolation. 100 metres is short, walking is healthy, car washes clean cars. It just can’t zoom out and see the physical reality of the situation. Each part makes sense on its own. The whole is missed entirely. I’ve been running into the same problem.

From language to something visceral

When you delegate to a person, they eventually absorb your standards. They develop their own judgement, and over time they need less direction. When you delegate to AI, the learning doesn’t live in the agent. It lives in the artefacts you build around it: the guidelines, frameworks, and process docs. Every time you refine a style guide because the output keeps missing something, you’re encoding something into the system.

The thing is, AI tools are very good at producing a professional baseline. Clean, competent, polished. The kind of output that used to cost real money. But there’s a level above that, the level where real companies actually publish things, and it’s learnable. Not because it requires genius, but because it follows patterns you can observe and extract.

I’ve been testing this with video, which turns out to be a hard version of the problem. I’m building a production pipeline using Claude and Remotion, and the first thing we did was look at what good actually looks like. We pulled apart product videos from companies that ship polished work, frame by frame, looking at how they use typography, timing, interaction, and motion. Not to copy any one style, but to understand what separates a video that feels real from one that feels generated. The principles came from observing what looked good, or at least what people like.

With language-based work, delegation is comfortable because both you and Claude are fluent. You can point to a sentence and say “this is too long” or “this repeats the section above” and get something useful back. Video breaks that. It has an emotional, visceral quality where waving your hand at the screen and saying “this doesn’t feel right” is valid feedback, but useless as an instruction.

Claude’s default output sits squarely at the professional baseline. Small elements, tastefully arranged, more like actual UI than a marketing video. The kind of thing that looks fine in a screenshot and is dull in motion.

The car wash problem, applied to design

Three versions of the same limitation keep showing up.

The first is bold vs safe. Claude makes things small and constrained when the medium demands big and punchy. You can push it there, but if you show it a bold example, it copies the example. Next time you get the same layout, the same energy, the same moves. You haven’t taught it to be bold. You’ve taught it one specific way to be bold.

The second is principles vs patterns. Give Claude a reference video and it copies the pixels. Getting it to absorb the principle behind the reference, to understand why that choice works and when to apply it, is a different order of difficulty. The goal isn’t twenty videos that look the same. It’s twenty videos expressing different styles that all look good.

The third is forest vs trees. Claude evaluates each element in isolation and says it looks good, but it doesn’t see how the elements relate to each other, how the composition feels as a whole, or whether the pacing builds tension or just fills time. Each fact is correct, and the obvious thing is invisible.

One example: Claude kept rendering product features as static dashboard screenshots. Technically accurate, visually dead. The fix was a single principle in the process doc: show the interaction, not the result. The next render had a cursor moving through the UI, elements responding to clicks, the feature coming alive. Same information, completely different feel. That’s the kind of thing the system needs to learn, not as a rule to copy but as a way of thinking about how to show things.

Two terminals

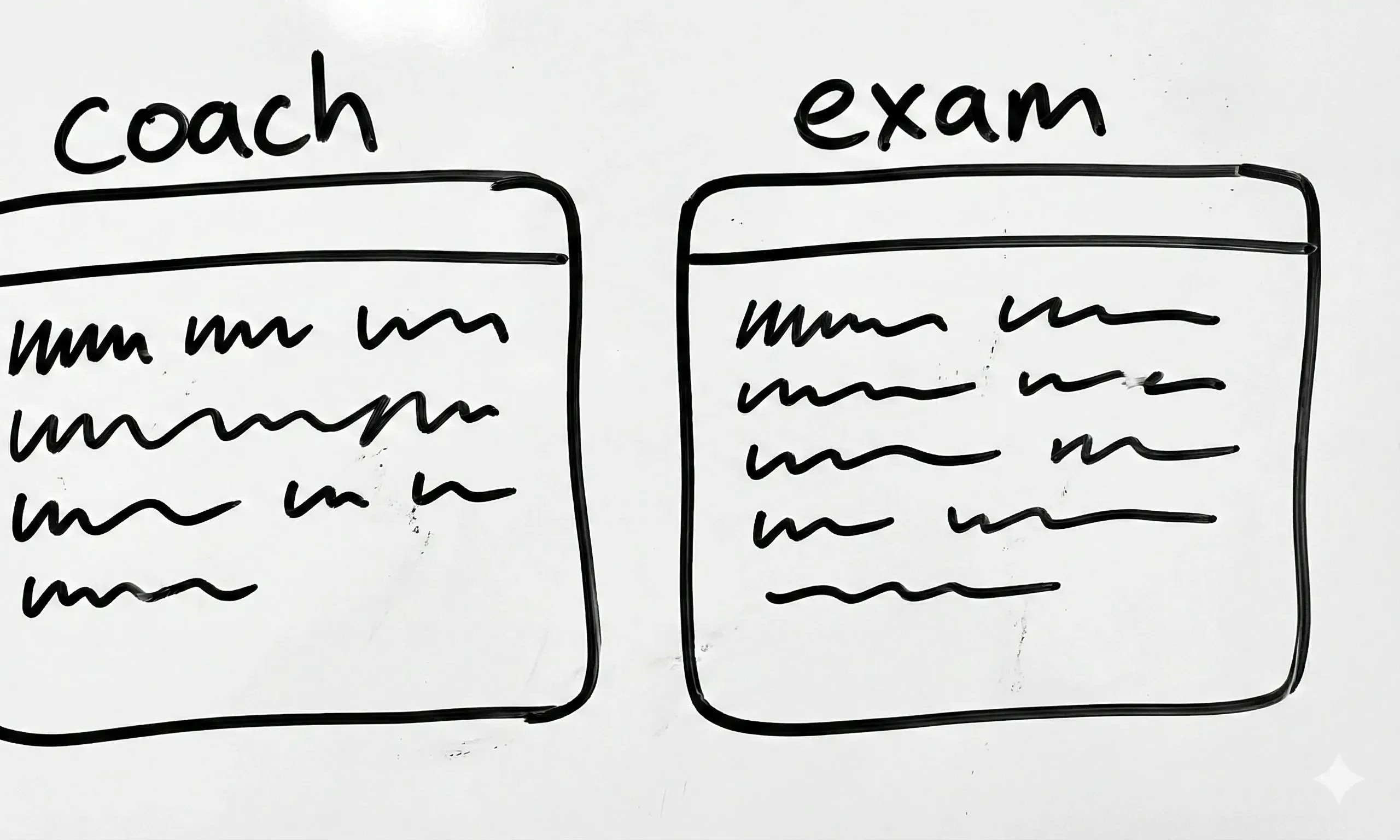

This is still very much experimental, not a finished workflow. But the setup I’ve landed on for testing is straightforward. One terminal runs the training agent, the Claude session with all the accumulated context, the one I’m actively coaching. The other runs a fresh agent with zero prior context, just the process docs. The question is whether the documentation alone is enough for a fresh agent to produce good work.

If it can’t, the docs aren’t good enough. So you fix the docs, not the video, and run the test again. Each cycle tightens the loop (or occasionally goes way off the rails). When the fresh agent defaults to safe and small, you add a principle like “demonstrate, don’t display.” When it copies a reference literally, you add guidance on extracting principles from examples rather than mimicking them. When it evaluates elements in isolation, you add composition rules that force it to consider the whole frame.
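To make the loop concrete, here is a minimal sketch of it in Python. It is not the actual tooling behind this setup. Every name in it is hypothetical (`ProcessDocs`, `DOC_FIXES`, `coaching_loop`, and the failure-mode labels), and the agent run and the human review are passed in as plain functions because the real versions are a Claude session and a person watching a render.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical mapping from an observed failure mode to the principle that
# gets added to the process docs. The keys are illustrative labels only.
DOC_FIXES = {
    "safe_and_small": "Demonstrate, don't display.",
    "copies_reference": "Extract the principle behind a reference; do not mimic it.",
    "elements_in_isolation": "Judge the whole frame before approving any single element.",
}


@dataclass
class ProcessDocs:
    """The accumulated guidance a fresh agent is given (assumed structure)."""
    principles: List[str] = field(default_factory=list)

    def apply_fix(self, failure_mode: str) -> bool:
        """Add the principle for a failure mode; return True if the docs changed."""
        principle = DOC_FIXES[failure_mode]
        if principle in self.principles:
            return False
        self.principles.append(principle)
        return True


def coaching_loop(
    docs: ProcessDocs,
    run_fresh_agent: Callable[[ProcessDocs], object],
    review: Callable[[object], List[str]],
    max_cycles: int = 5,
) -> Optional[int]:
    """Run a fresh agent, review its output, fix the docs, and repeat.

    Returns the cycle number on which the review found no failures,
    or None if max_cycles was reached first.
    """
    for cycle in range(1, max_cycles + 1):
        output = run_fresh_agent(docs)   # fresh agent sees only the docs
        failures = review(output)        # a human names the failure modes
        if not failures:
            return cycle
        for failure in failures:
            docs.apply_fix(failure)      # fix the docs, never the video
    return None
```

The one design choice worth noting is that the loop only ever mutates `docs`. The output of each run is thrown away, which is the point: if the fix doesn't survive in the documentation, the next fresh agent won't have it.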

Of course, the fresh agent isn’t the final judge. A human still looks at the output and reacts. The system doesn’t remove the gut check, it just gets to something worth reacting to faster. Three iterations instead of thirty.

The interesting discovery is that you’re not really making videos. You’re figuring out whether craft can live in documentation. Whether the work of studying what good looks like, pulling it apart, and writing it down can mean that the next person, or the next agent, doesn’t have to start from scratch.

Where language runs out

The goal isn’t a system that replicates videos. It’s a system where you describe what you want, iterate a few times, and get something that expresses your vision well. The process docs aren’t encoding a specific aesthetic, they’re trying to encode the ability to listen, interpret, and deliver.

That’s what makes this slower work worth doing. The heavy lifting of observation, the frame-by-frame analysis, the iterative doc-fixing, that happens once. If it works, anyone can drop in a brief and get craft-level output without the weeks of study that produced the system.

Most AI delegation right now is prompting: one-off instructions for one-off outputs. What I’ve been working on might be more akin to programming: systemic constraints that shape how the AI approaches any brief, not just mine. The shift from “do this specific thing” to “here’s how to think about this kind of thing” is where the interesting work is, and it starts the moment you push past language into territory where the feedback is visceral and the quality is harder to pin down.
